Digital Sovereignty in the Age of AI

When companies adopt artificial intelligence, the primary emphasis is usually on its functionality; concerns regarding digital sovereignty take a backseat. However, few technologies influence data, processes, and infrastructure as significantly as AI.

This poses a dilemma for decision-makers. Those who hesitate to adopt AI risk falling behind competitors. Those who rush in may lose flexibility. And those who do not actively manage adoption may give up more control than they intended. Digital sovereignty enables a deliberate approach to managing dependencies in this complex landscape and keeps strategic options open.

This article breaks AI sovereignty down into its essential dimensions, identifies typical dependencies, and offers organizations guidance on using AI.

What risks does AI pose to digital sovereignty?

AI adoption is progressing faster than previous technological shifts. Speed, cost advantages, and growing market pressure heighten the urgency for decision-makers. At the same time, a handful of global companies dominate the market for powerful generative AI systems. To stay competitive, many organizations quickly turn to these established solutions.

This concentration leaves companies heavily dependent on model operators, cloud infrastructures, and data sources to which they have no direct access. As a result, sensitive company information may end up in environments whose security measures and terms of use are beyond their control. A frequently overlooked risk is that employees use public AI systems such as ChatGPT on their own initiative, sometimes without the company's knowledge or consent. This can lead to sensitive data leaking unintentionally and often unnoticed.

Furthermore, the legal, social, and political framework for AI is not yet fully established. Organizations must therefore develop their AI strategies in a constantly changing environment and address risks that cannot yet be fully predicted.

Sovereignty allows for flexibility

This is exactly why digital sovereignty is vital: it empowers businesses to consciously shape dependencies in AI implementation, recognize control needs early on, and preserve their ability to adapt even as circumstances evolve.

Those who prioritize digital sovereignty from the beginning and intentionally establish clear protocols for AI usage gain three significant benefits:

Orientation: Employees understand what is important – even during uncertain times.

Trust: Customers and partners recognize a clear course that goes beyond short-term gains.

Resilience: Organizations that make deliberate choices today can adapt more readily when circumstances change.

Background: What does digital sovereignty mean?

The term “digital sovereignty” has emerged as a key concept in strategy papers, government statements, and board discussions. As IT environments grow more complex, the focus is on creating infrastructures that allow for self-determination, enhancing the resilience and responsiveness of organizations.

The key is to strike a balance between independence, cost-effectiveness, and performance. Complete self-sufficiency is not required, but organizations must make informed decisions about which dependencies are acceptable and which are not. A structured assessment of the current situation and the target state helps here: it shows where an organization is most vulnerable and helps decision-makers establish digital sovereignty effectively.

Digital sovereignty in the context of AI

Digital sovereignty in AI does not mean restricting AI or avoiding foreign providers. Rather, it means retaining control, preserving the ability to act, and making risks transparent. This can be achieved by choosing the right providers, designing the infrastructure appropriately, and establishing clear governance rules. These are the prerequisites for using AI responsibly.

How can AI sovereignty be implemented?

There is no one-size-fits-all solution for digital sovereignty. Depending on an organization's size and sector, the focus can vary significantly, with direct implications for architecture, contracts, and documentation requirements.

Large corporations have the resources to develop and train their own models. This allows them to build specialized teams and retain the ability to develop and operate AI systems in-house (offline). Their focus is on technological sovereignty and independence from external providers.

Small and medium-sized enterprises (SMEs) increasingly rely on external solutions. They need to select these solutions carefully and, above all, retain control over governance and data sovereignty. Their focus is less on in-house development and more on integrating external solutions in a controlled way.

Financial institutions and insurers face heightened regulatory requirements. The Digital Operational Resilience Act (DORA), the EU AI Act, and EIOPA guidelines impose stricter control and documentation obligations. In this sector, compliance with these rules is increasingly becoming a key factor in achieving digital sovereignty.

Setting the right priorities for each use case requires breaking digital sovereignty down into its dimensions. Not every scenario needs cloud-based AI systems with broad general knowledge and external interfaces. For many use cases, especially those where the AI's cognitive capabilities matter most, offline AI solutions offer a robust and sovereign alternative: data remains entirely within the organization, and targeted fine-tuning can incorporate additional domain expertise. The result is considerably more control and less dependence on external parties.

How can organizations assess their AI sovereignty?

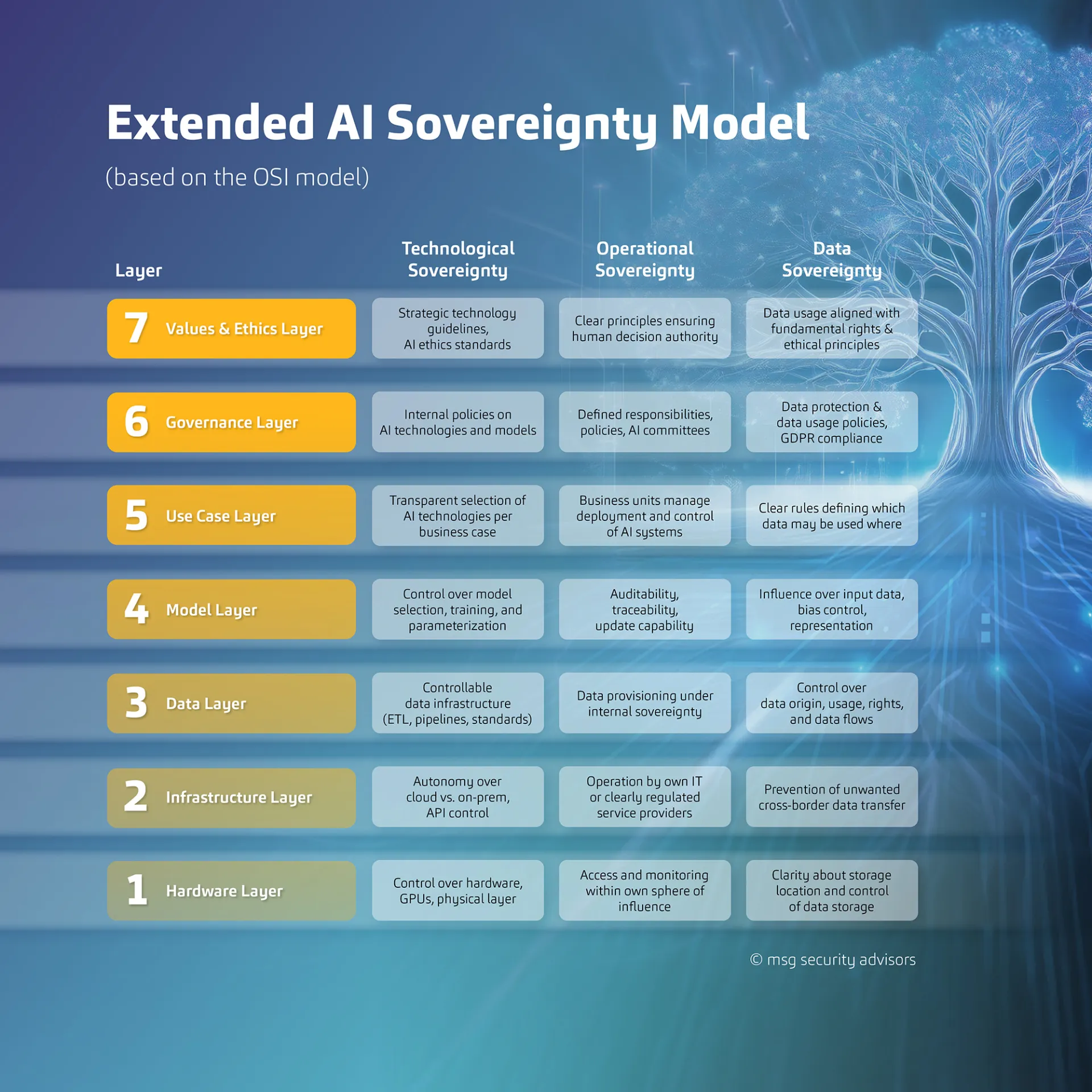

Digital sovereignty rests on three main pillars: technological sovereignty, operational sovereignty, and data sovereignty. Together, these pillars provide a framework for systematically identifying and managing dependencies. For AI use, each pillar addresses a guiding question:

Technological sovereignty

“Do we have sufficient control and freedom of choice when it comes to the AI technology we use?”

The emphasis lies on infrastructure, architecture, software, vendor neutrality, and in-house expertise.

Operational sovereignty

“Are we equipped in terms of organization, personnel, and procedures to operate and/or take responsibility for AI on our own?”

The emphasis is placed on processes, roles, and the autonomy of decision-making within the organization.

Data sovereignty

“Do we have full control over the origin, storage, use, and protection of our data in AI applications?”

The emphasis is placed on control over data, regulatory safeguards, and the risk of unintended data leaks.

The seven levels of AI sovereignty

Across the three pillars, sovereignty can be examined on seven interconnected levels:

- Hardware (level 1): Physical components (chips, servers, physical location)

- Infrastructure (level 2): Cloud environment, data centers, networks

- Data (level 3): Origin, sovereignty, confidentiality

- Model (level 4): AI algorithms and their training

- Use case (level 5): Specific use cases and integrations

- Governance (level 6): Management and control processes

- Values and ethics (level 7): Principles for responsible AI usage

These levels help identify risks in AI use and reveal blind spots, from physical infrastructure to ethical accountability. They give organizations a comprehensive view of how much control they actually have over use cases, models, and governance processes.

The matrix: 3 pillars × 7 levels

Combining the three pillars with the seven levels yields a matrix of 21 assessment fields (3 × 7). This enables a nuanced self-assessment that answers questions such as: At which levels are we technologically dependent? Where do we lack operational expertise? Where is our data sovereignty at risk? The matrix shows clearly where strategic action is needed and provides a solid basis for prioritization. The levels do not have to be addressed in sequence; what matters is identifying where your own organization needs to act immediately.

For example, a company might find that it controls its own data (level 3) but has no control over the AI model it uses (level 4) or the underlying cloud infrastructure (level 2).
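To make the self-assessment more tangible, the matrix can be sketched as a simple data structure. The Python example below is a minimal illustration under stated assumptions: it maps each (pillar, level) pair to a score on a hypothetical 0 to 3 scale, numbers the levels in the order they are listed above, and flags fields below an assumed threshold of 2 as candidates for action. All names (`Pillar`, `Level`, `empty_matrix`, `unassessed_fields`, `action_fields`) and the scoring scheme are illustrative, not part of a published assessment method.

```python
from enum import Enum
from itertools import product
from typing import Dict, List, Optional, Tuple


class Pillar(Enum):
    TECHNOLOGICAL = "technological"
    OPERATIONAL = "operational"
    DATA = "data"


class Level(Enum):
    # Numbering follows the order in which the seven levels are listed.
    HARDWARE = 1
    INFRASTRUCTURE = 2
    DATA = 3
    MODEL = 4
    USE_CASE = 5
    GOVERNANCE = 6
    VALUES_AND_ETHICS = 7


Field = Tuple[Pillar, Level]
# Hypothetical scale: 0 = no control, 3 = full control; None = not yet assessed.
Matrix = Dict[Field, Optional[int]]


def empty_matrix() -> Matrix:
    """Create all 3 x 7 = 21 assessment fields, none assessed yet."""
    return {field: None for field in product(Pillar, Level)}


def unassessed_fields(matrix: Matrix) -> List[Field]:
    """Fields that still need to be looked at."""
    return [field for field, score in matrix.items() if score is None]


def action_fields(matrix: Matrix, threshold: int = 2) -> List[Tuple[Field, int]]:
    """Assessed fields scoring below the (assumed) threshold, weakest first."""
    gaps = [(field, score) for field, score in matrix.items()
            if score is not None and score < threshold]
    # Sort by score, then by level number; ties keep the matrix order.
    return sorted(gaps, key=lambda item: (item[1], item[0][1].value))


if __name__ == "__main__":
    matrix = empty_matrix()
    # Hypothetical scores mirroring the example: own data, little control over model and cloud.
    matrix[(Pillar.DATA, Level.DATA)] = 3
    matrix[(Pillar.TECHNOLOGICAL, Level.MODEL)] = 0
    matrix[(Pillar.TECHNOLOGICAL, Level.INFRASTRUCTURE)] = 1

    for (pillar, level), score in action_fields(matrix):
        print(f"level {level.value} ({level.name.lower()}), {pillar.value} pillar: score {score}")
    # level 4 (model), technological pillar: score 0
    # level 2 (infrastructure), technological pillar: score 1
    print(f"{len(unassessed_fields(matrix))} of {len(matrix)} fields not yet assessed")
    # 18 of 21 fields not yet assessed
```

The scores in the `__main__` block encode the example above, assuming that control over the data falls under the data pillar and that control over the model and the cloud falls under the technological pillar. Keeping unassessed fields as `None` rather than defaulting them to a score makes it visible which parts of the matrix have not yet been examined. A real assessment would use the organization's own criteria and weighting.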

Sovereign AI use in practice

A medium-sized insurance company introduces an external AI solution for automated risk assessment in life insurance. The models are hosted by a third-party provider and trained on anonymized customer records. At first glance, the solution appears both compliant and effective. An internal review, however, paints a different picture and reveals several vulnerabilities:

Level 4 (model): No insight into model behavior or decision-making logic

Level 2 (infrastructure): No control over the third-party provider's cloud

Level 6 (governance): Insufficient governance audits

An audit carried out as part of the AI risk analysis uncovers further gaps: bias is not controlled consistently, data flows are only partially documented, and the use of public AI models to process sensitive, GDPR-relevant data turns out to be effectively impermissible.

The result:

The supervisory authority asks critical questions. The insurer must make major improvements at short notice, under tight deadlines and with substantial additional effort.

A lack of sovereignty is especially critical in heavily regulated sectors such as insurance and finance, where sensitive customer data, strict requirements under DORA and EIOPA guidelines, and the growing demands of the EU AI Act converge. Organizations that fail to make AI-driven decisions transparent risk not only regulatory consequences but also reputational damage.

Digitally sovereign companies actively avoid such scenarios. They make their dependencies transparent, define clear responsibilities, and prepare exit strategies long before they are urgently needed.

Shaping AI transformation rather than being led by it

The AI revolution is inevitable and full of possibilities. However, it demands the right mindset, clear values, and dependable processes. Above all, it requires clarity about one's own dependencies so that genuine options for action remain. Digital sovereignty should not be seen as an obstacle to innovation; it is a strategic asset that must be organized and continually strengthened. After all, you cannot regain control if you do not know where you are losing it.

msg advisors helps organizations carry out sovereignty assessments and provides strategic consulting so that they can use AI in a digitally sovereign way and reap the benefits of the technology without incurring excessive costs.